“My personal worry is that for a long time, we sought to diversify the voices - you know, who is telling the stories? And we tried to give agency to people from different parts of the world,“ she said. Used carelessly, generative AI could represent a step backwards. For instance, they now show greater diversity in terms of race and gender, and better represent people with disabilities, Piaggio told Rest of World. According to Valeria Piaggio, global head of diversity, equity, and inclusion at marketing consultancy Kantar, the marketing and advertising industries have in recent years made strides in how they represent different groups, though there is still much progress to be made. The accessibility and scale of AI tools mean they could have an outsized impact on how almost any community is represented. Image generators are being used for diverse applications, including in the advertising and creative industries, and even in tools designed to make forensic sketches of crime suspects. Researchers told Rest of World this could cause real harm. “It definitely doesn’t represent the complexity and the heterogeneity, the diversity of these cultures,” Sasha Luccioni, a researcher in ethical and sustainable AI at Hugging Face, told Rest of World. Midjourney did not respond to multiple requests for an interview or comment for this story. Even stereotypes that are not inherently negative, she said, are still stereotypes: They reflect a particular value judgment, and a winnowing of diversity. “Essentially what this is doing is flattening descriptions of, say, ‘an Indian person’ or ‘a Nigerian house’ into particular stereotypes which could be viewed in a negative light,” Amba Kak, executive director of the AI Now Institute, a U.S.-based policy research organization, told Rest of World. In Indonesia, food is served almost exclusively on banana leaves. 
in the survey for comparison, given Midjourney (like most of the biggest generative AI companies) is based in the country.įor each prompt and country combination (e.g., “an Indian person,” “a house in Mexico,” “a plate of Nigerian food”), we generated 100 images, resulting in a data set of 3,000 images. Using Midjourney, we chose five prompts, based on the generic concepts of “a person,” “a woman,” “a house,” “a street,” and “a plate of food.” We then adapted them for different countries: China, India, Indonesia, Mexico, and Nigeria. In an analysis of more than 5,000 AI images, Bloomberg found that images associated with higher-paying job titles featured people with lighter skin tones, and that results for most professional roles were male-dominated.Ī new Rest of World analysis shows that generative AI systems have tendencies toward bias, stereotypes, and reductionism when it comes to national identities, too.

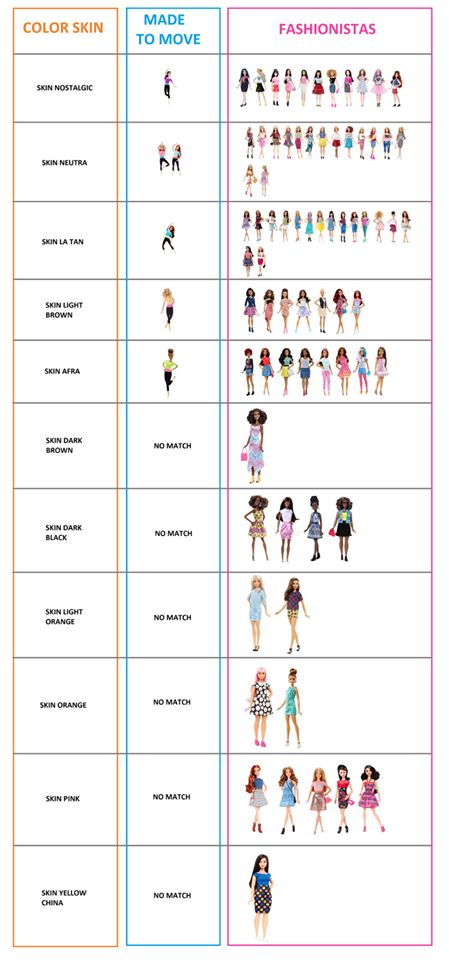

The article - to which BuzzFeed added a disclaimer before taking it down entirely - offered an unintentionally striking example of the biases and stereotypes that proliferate in images produced by the recent wave of generative AI text-to-image systems, such as Midjourney, Dall-E, and Stable Diffusion.īias occurs in many algorithms and AI systems - from sexist and racist search results to facial recognition systems that perform worse on Black faces. Lebanon Barbie posed standing on rubble Germany Barbie wore military-style clothing. The depictions were clearly flawed: Several of the Asian Barbies were light-skinned Thailand Barbie, Singapore Barbie, and the Philippines Barbie all had blonde hair. Each doll was supposed to represent a different country: Afghanistan Barbie, Albania Barbie, Algeria Barbie, and so on. In July, BuzzFeed posted a list of 195 images of Barbie dolls produced using Midjourney, the popular artificial intelligence image generator.
